Typos that omit security features and how to test for them

During a security audit, I discovered an easy-to-miss typo that unintentionally failed to enable _FORTIFY_SOURCE, which helps detect memory corruption bugs in incorrectly used C functions. We searched, found, and fixed twenty C and C++ bugs on GitHub with this same pattern. Here is a list of some of them related to this typo:

- microsoft/binskim#777

- PowerShell/PowerShell-Native#88

- apple-open-source/macos#3 (this is an unofficial fork, so I also reported the issue through Apple’s Feedback Assistant)

- trailofbits/cb-multios#96 (Yeah, we also had this issue!)

- lavabit/libdime#49

- lavabit/magma#155

- Jackysi/advancedtomato#454

- adaptivecomputing/torque#474

- gstrauss/mcdb#14

- Homegear/Homegear#364

- sergey-dryabzhinsky/dedupsqlfs#235

- randlabs/algorand-windows-node#5

- rpodgorny/unionfs-fuse#131

- cgaebel/pipe#15

- jkrh/kvms#48

- angaza/nexus-embedded#8

- hashbang/book#24

We’ll show you how to test your builds to avoid this issue, which could make bugs easier to exploit.

How source fortification works

Source fortification is a security mitigation that replaces certain function calls with more secure wrappers that perform additional runtime or compile-time checks.

Source fortification is enabled by defining a special macro, _FORTIFY_SOURCE, with a value of 1, 2, or 3 and compiling the program with optimizations. The higher the value, the more functions are fortified or the more checks are performed. The libc library and the compiler must also support source fortification, which is the case for glibc, Apple Libc, gcc, and LLVM/Clang, but not for musl libc and uClibc-ng. The implementation specifics may also vary. For example, level 3 was only recently added in glibc 2.34, and it does not seem to be available in Apple Libc.

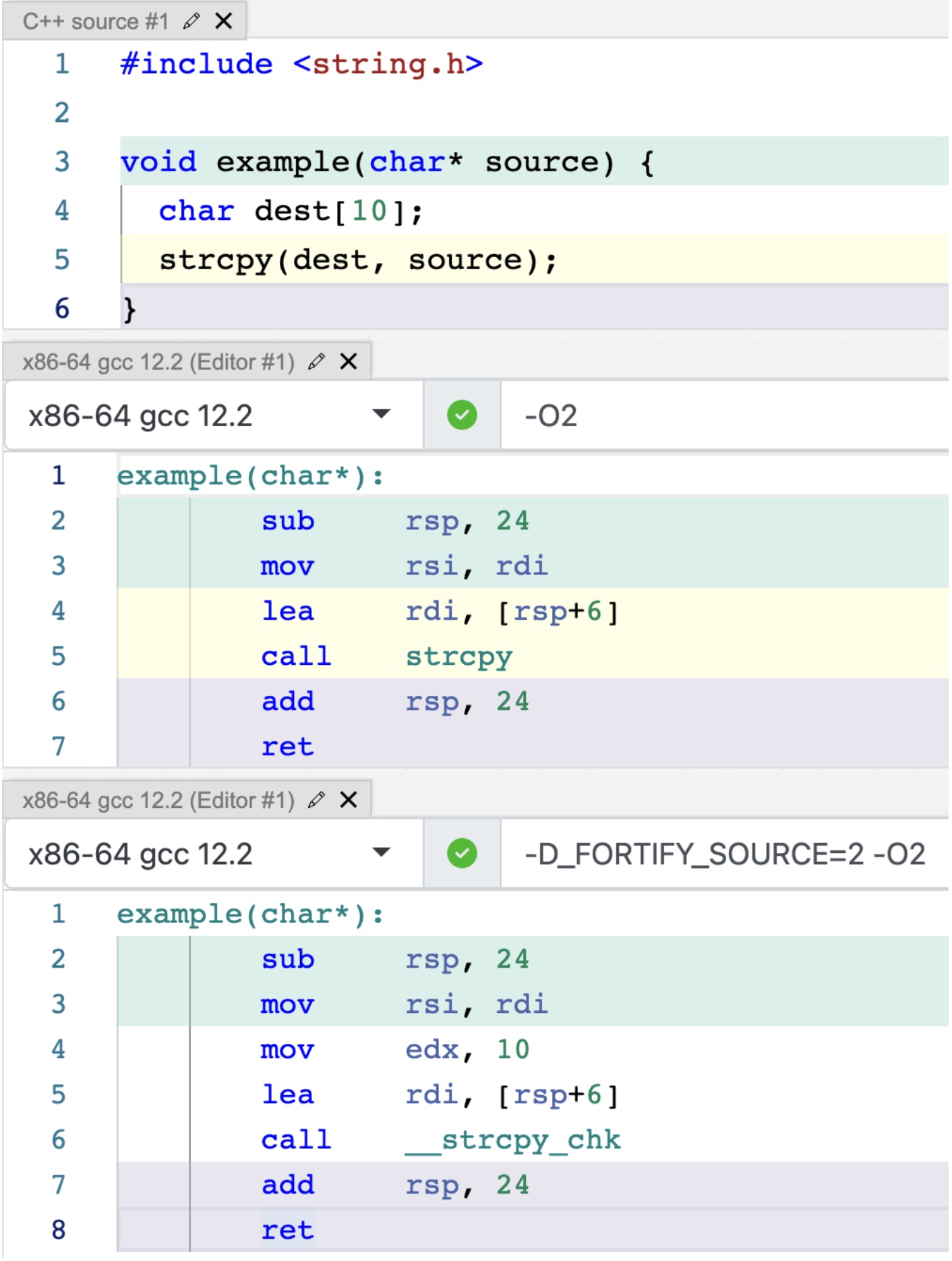

The following example shows source fortification in action. Depending on whether we enable the mitigation, the resulting binary calls either the strcpy function or its __strcpy_chk wrapper:

The __strcpy_chk wrapper function is implemented by glibc (source):

    /* Copy SRC to DEST and check DEST buffer overflow */
    char * __strcpy_chk (char *dest, const char *src, size_t destlen) {
      size_t len = strlen (src);
      if (len >= destlen)
        __chk_fail ();

      return memcpy (dest, src, len + 1);
    }

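__strcpy_chk function from glibc

If the source string, including its terminating null byte, does not fit in the destination buffer, the wrapper calls the __chk_fail function, which aborts the process. Figure 1 shows that the compiled code passes the correct length of the destination buffer, dest, in the mov edx, 10 instruction.

Tpying is hard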

Since source fortification is controlled by a preprocessor macro, a typo in the macro’s name silently disables it. Neither the libc nor the compiler catches this, unlike typos in other security hardening options, which are enabled with compiler flags instead of macros.

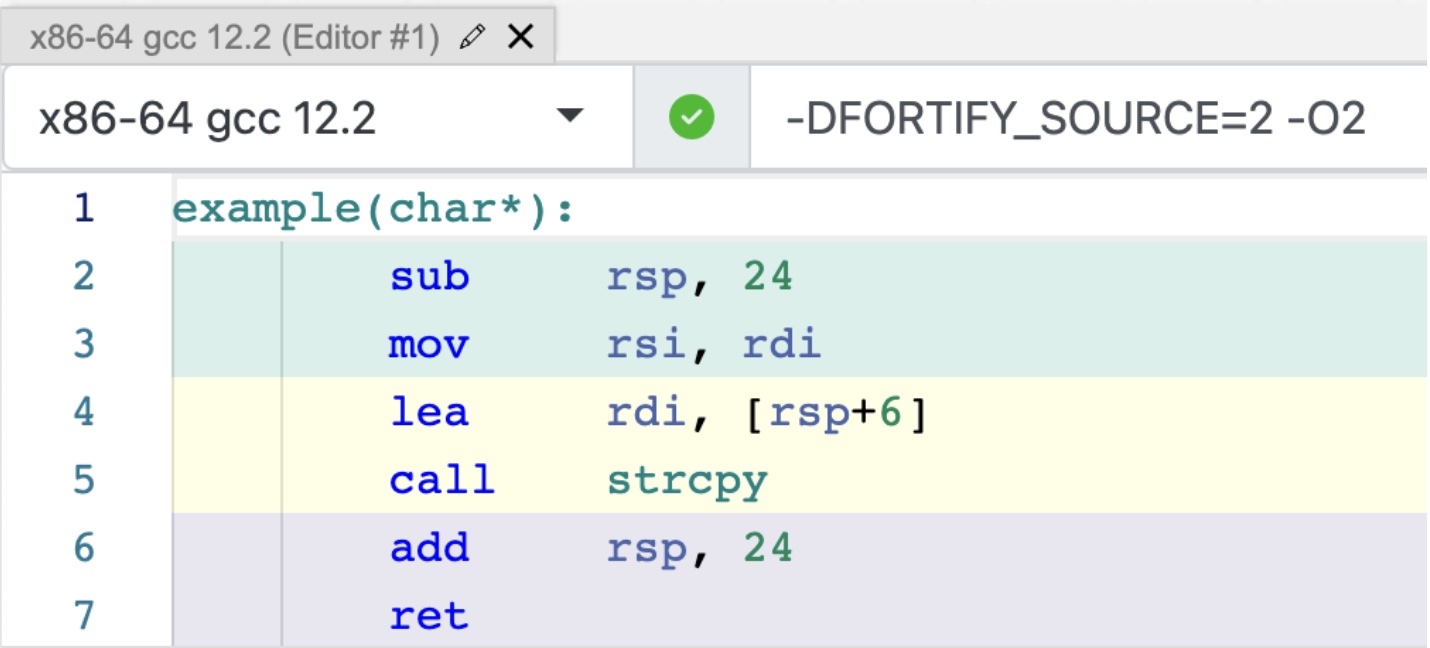

For example, if you pass “-DFORTIFY_SOURCE=2 -O2” instead of “-D_FORTIFY_SOURCE=2 -O2” to the compiler, source fortification won’t be enabled, and the wrapper functions will not be used. I also came across a few related cases:

- kometchtech/docker-build#50 used “-FORTIFY_SOURCE=2 -O2”. The compiler does not report this as an error because it parses it as a “-F<dir>” flag, which sets the “search path for framework include files.”

- ned14/quickcpplib#37 had a typo in the “-fsanitize=safe-stack” compiler flag. Although compilers detect such a typo, the flag was used in a CMake script to determine if the compiler supports the safe stack mitigation. The CMake script never enabled this mitigation because of this typo. I found this case thanks to my colleague, Paweł Płatek, who suggested checking whether compilers detect typos in security-related flags. Although they do, flag typos may still cause issues during compiler feature detection.

- OpenImageIO/oiio#3729 was an invalid report/PR since the “-DFORTIFY_SOURCE=2” option provided a value for a CMake variable that eventually led to setting the proper _FORTIFY_SOURCE macro. (However, that is still an unfortunate CMake variable name.)

The three code search tools I used can find more cases like this, but I didn’t send PRs for all of them, for example, when a project seemed abandoned.
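The misspellings described above can be caught mechanically before the flags ever reach the compiler. The following is a minimal sketch, not a complete solution: the function name check_fortify_flags is hypothetical, and it assumes the flags are available as one space-separated string. It accepts only the exact -D_FORTIFY_SOURCE=<1|2|3> spelling and also rejects flag sets that contain -O0 or no -O flag at all, since fortification needs optimizations.

```shell
#!/bin/sh
# Hypothetical helper (not part of any tool mentioned in this post).
# Prints "ok" and returns 0 only if the given flags contain a correctly
# spelled -D_FORTIFY_SOURCE=<1|2|3> together with an optimization flag.
# Simplifications: it does not model later flags overriding earlier ones,
# and it does not understand spellings such as -Wp,-D_FORTIFY_SOURCE=2.
check_fortify_flags() {
    flags=" $* "    # pad with spaces so every flag is space-delimited

    case "$flags" in
        *" -D_FORTIFY_SOURCE="[123]" "*) ;;
        *)
            echo "error: -D_FORTIFY_SOURCE=<1|2|3> not found (misspelled?)"
            return 1
            ;;
    esac

    case "$flags" in
        *" -O0 "*)
            echo "error: -O0 disables source fortification"
            return 1
            ;;
        *" -O"*) ;;
        *)
            echo "error: no optimization flag found"
            return 1
            ;;
    esac

    echo "ok"
}
```

A build script could call it as check_fortify_flags "$CPPFLAGS $CFLAGS" and stop the build when it returns a non-zero status.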

Testing _FORTIFY_SOURCE mitigation

In addition to testing code during continuous integration, developers should also test the output of their build systems and the options they have chosen to enable. Apart from helping to detect regressions, this can also help you understand what the options really do, such as the fact that source fortification is silently disabled when optimizations are disabled.

So, how do you see if you enabled source fortification correctly? You can scan the symbols used by your binary and ensure that the fortified source functions you expect to be used are really used. A simple Bash script like the one shown below can achieve this:

    if readelf --symbols /bin/ls | grep -q ' __snprintf_chk@'; then
        echo "snprintf is fortified"
    else
        echo "snprintf is not fortified"
    fi

Before using a tool, it’s a good idea to double-check that it works properly. As referenced in the above list of bugs, BinSkim had a typo in its recommendations text. Another bug, this time in checksec.sh, led to incorrect results in the “Home Router Security Report 2020.” What was the reason for the bug in checksec.sh? If a scanned binary used stack canaries, the “__stack_chk_fail” symbol (used to abort the program if the canary was corrupted) was incorrectly counted as evidence of source fortification. This is because checksec.sh looked for a “_chk” string anywhere in the output of the readelf --symbols command, instead of requiring that the symbol name end with the “_chk” suffix. This bug appears to be fixed after the issues reported in slimm609/checksec.sh#103 and slimm609/checksec.sh#130 were resolved.
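The suffix check described above can be sketched in a few lines of shell. This is only an illustration under stated assumptions, not the actual checksec.sh fix: the function name list_fortified_symbols is hypothetical, and the sketch assumes the usual readelf --symbols layout, in which the symbol name is the eighth whitespace-separated field and may carry an “@VERSION” suffix.

```shell
#!/bin/sh
# Hypothetical helper: reads `readelf --symbols` output on stdin and
# prints the unique symbol names that END with "_chk".
# Assumes the symbol name is the 8th field, optionally followed by
# "@VERSION", which is stripped before the suffix is tested.
list_fortified_symbols() {
    awk '{
        name = $8
        sub(/@.*/, "", name)              # drop the symbol version, if any
        if (name ~ /_chk$/) print name    # suffix match, not substring match
    }' | sort -u
}
```

It could be used as readelf --symbols --wide ./your_binary | list_fortified_symbols. Because the match is anchored to the end of the name, “__stack_chk_fail” is not reported.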

It is also worth noting that both BinSkim and checksec.sh can tell you how many fortifiable functions there are vs. how many are fortified in your binary. How do they do that? BinSkim keeps a hard-coded list of fortifiable function names deduced from glibc, and checksec.sh scans your own glibc to determine those names. Although this can prevent some false positives, those solutions are still imperfect. What if your binary is linked against a different libc or, in the case of BinSkim, what if glibc added new fortifiable functions? Last but not least, none of the tools detect the actual fortification level used, but perhaps that only impacts the number of fortifiable functions. I am not sure.
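To make the hard-coded-list approach concrete, here is a rough sketch of the idea. The FORTIFIABLE list below is a short illustrative sample that I made up for this example, not the list that BinSkim or checksec.sh uses, and report_fortification is a hypothetical name. As before, it assumes the symbol name is the eighth field of the readelf --symbols output.

```shell
#!/bin/sh
# Illustrative and deliberately incomplete list of fortifiable functions.
FORTIFIABLE="memcpy memmove memset strcpy strncpy strcat sprintf snprintf"

# Hypothetical helper: reads `readelf --symbols` output on stdin and, for
# each listed function the binary references, reports whether the plain
# symbol and/or its __<name>_chk wrapper is present.
report_fortification() {
    # Extract bare symbol names (8th field, version suffix removed).
    symbols=$(awk '{ name = $8; sub(/@.*/, "", name); print name }')

    for fn in $FORTIFIABLE; do
        plain=no
        checked=no
        printf '%s\n' "$symbols" | grep -qx "$fn" && plain=yes
        printf '%s\n' "$symbols" | grep -qx "__${fn}_chk" && checked=yes
        # Skip functions the binary does not reference at all.
        [ "$plain$checked" = "nono" ] || echo "$fn plain=$plain fortified=$checked"
    done
}
```

A plain symbol in the report is not necessarily a bug: the compiler falls back to the unfortified function when it cannot determine the size of the destination buffer. The sketch also shares the weakness discussed above, since the list goes stale as soon as the libc adds new fortifiable functions.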

Fun fact: Typo in Nginx

During this research, I also found out that the Nginx package from Debian had this kind of typo bug in the past. Currently, the Nginx package uses the dpkg-buildflags tool, which provides the proper macro flag:

    $ dpkg-buildflags --get CPPFLAGS
    -Wdate-time -D_FORTIFY_SOURCE=2
    $ dpkg-buildflags --get CFLAGS
    -g -O2 -fdebug-prefix-map=/tmp/nginx-1.18.0=. -fstack-protector-strong -Wformat -Werror=format-security

It is weird that the source fortification and optimization flags are separated into CPPFLAGS and CFLAGS, respectively. Wouldn’t some projects use one but not the other and miss some of the options? I haven’t checked that.

Some wishful thinking

In an ideal world, a compiler would automatically include information about all necessary security mitigations and hardening options in the generated binary. However, we are limited by the incomplete information we must work with.

When testing your build system, there doesn’t seem to be a silver bullet, especially since not all security mitigations are straightforward to check, and some may require analyzing the resulting assembly. We haven’t analyzed the tools exhaustively, but we would probably recommend using checksec.rs or BinSkim for Linux and winchecksec for Windows. We also plan to extend Blight, our build instrumentation tool, to find the mistakes described in this blog post during build time. Even so, it probably still makes sense to scan the resulting binary to confirm what the compiler and linker are doing.

Finally, contact us if you find this research interesting and you want to secure your software further, as we love to work on hard security problems.