Manticore GUIs made easy

Trail of Bits maintains Manticore, a symbolic execution engine that can analyze smart contracts and native binaries. While symbolic execution is a powerful technique that can augment the vulnerability discovery process, it requires some base domain knowledge and thus has its own learning curve. Given the plethora of ways in which a user can interact with such an engine and the need for frequent context switching between a disassembler and one’s terminal or script editor, integrating symbolic execution into one’s workflow can be daunting for a beginner.

One of the ways Trail of Bits has sought to ease this process is by making graphical user interfaces (GUIs) for Manticore that are embedded in popular interactive disassemblers. Last summer, former intern Alan Chang worked on the first such interface, the Manticore User Interface (MUI) plugin for Binary Ninja. We found that pairing Manticore directly with an interactive disassembler provides vulnerability researchers with a more convenient way to actually use (and benefit from) symbolic execution. Therefore, during my winter and summer internships at Trail of Bits, my goal was to help grow the MUI ecosystem by making a MUI plugin for Ghidra and building infrastructure to make these plugins easier to use, maintain, and develop.

The Ghidra Plugin

We figured that the most direct way to encourage more people to use MUI plugins would be to simply develop MUI plugins for a greater variety of disassemblers! Thus, I spent my winternship developing a Ghidra version of the MUI plugin; I chose Ghidra chiefly because it is popular and, unlike the commercial tool Binary Ninja, free and open source. Additionally, a few internal projects at Trail of Bits were already using Ghidra, so I would have ample opportunity to explore Ghidra plugin development. Finally, by developing a Ghidra plugin (one written in Java instead of Python), we could develop a solution that wouldn’t be tied to a single programming language, gaining insight that could guide the development of future plugins.

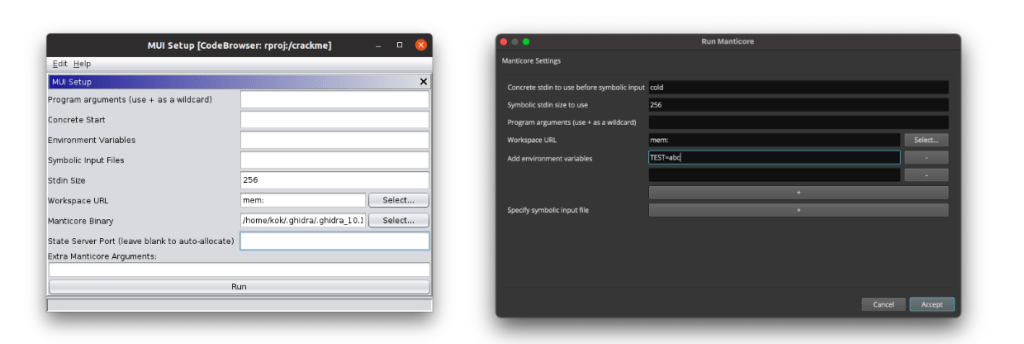

This initial Ghidra plugin mimicked the existing Binary Ninja plugin as closely as possible. While it took a bit of time to become familiar with Java Swing and Ghidra’s widgets, simply mimicking the existing visual components and user interface was a fairly trivial task once I got going.

However, because the Ghidra plugin would be written in Java, it could not depend on the Manticore Python package or directly call Manticore’s Python API. Our solution to that challenge was to use a tool called shiv to seamlessly bundle the Manticore library and all of its dependencies into a Python zipapp. That way, we could create a “batteries-included” Manticore binary and then translate the Binary Ninja plugin’s interactions with the Manticore API into the appropriate command-line arguments. We then placed this binary in the relevant platform-specific subdirectories of Ghidra’s os directory, which facilitates cross-platform support.

By the end of the winternship, I was able to add extra features to the Ghidra plugin, such as the ability to specify arbitrary Manticore arguments in addition to those with dedicated input fields and support for multiple Manticore instances in the same Ghidra session. This, however, brought to light an additional problem.

Feature parity and cross-disassembler development

It quickly became apparent that our approach to plugin development would not be sustainable if we aspired to expand the MUI project to support even more disassemblers. For each new MUI feature, we would first have to determine how to implement the feature, accounting for the way that the plugin interacts with Manticore (e.g., through direct calls to the Manticore API or through Manticore’s command-line interface options). Furthermore, certain front-end information shared across plugins (e.g., fixed description strings or sensible default options) would have to be repeated and standardized in each implementation.

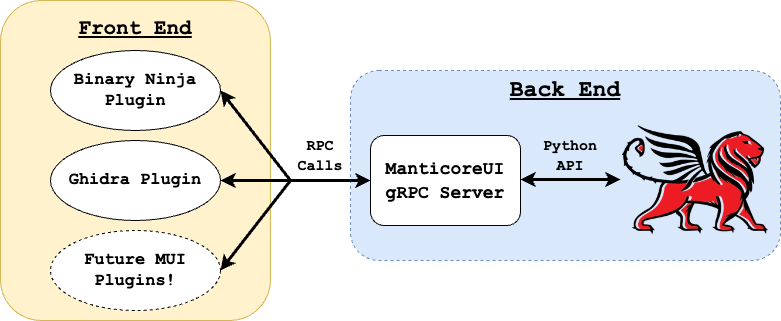

To address this problem, over the summer I developed a centralized remote procedure call (RPC) server binary for MUI. This server handles all interactions with the full-featured Manticore Python API and handles MUI functionality through individual RPCs defined in a protocol buffer. We chose to use gRPC as our RPC framework because of its performance, wide adoption, and strong support for code generation across many programming languages. As a result, MUI plugins of the future can easily contain and depend on their own gRPC-generated code.

The server is written in Python, providing it access to the full functionality of Manticore’s Python API, but is bundled into a shiv binary that can be called in any language. This facilitates a new client-server architecture that allows developers to implement any back-end Manticore functionality and tests just once. Developers of the front-end disassembler plugins can make RPC requests to the server with just a few lines of trivial code, which means that their work on individual plugins can focus almost entirely on front-end/UI changes.

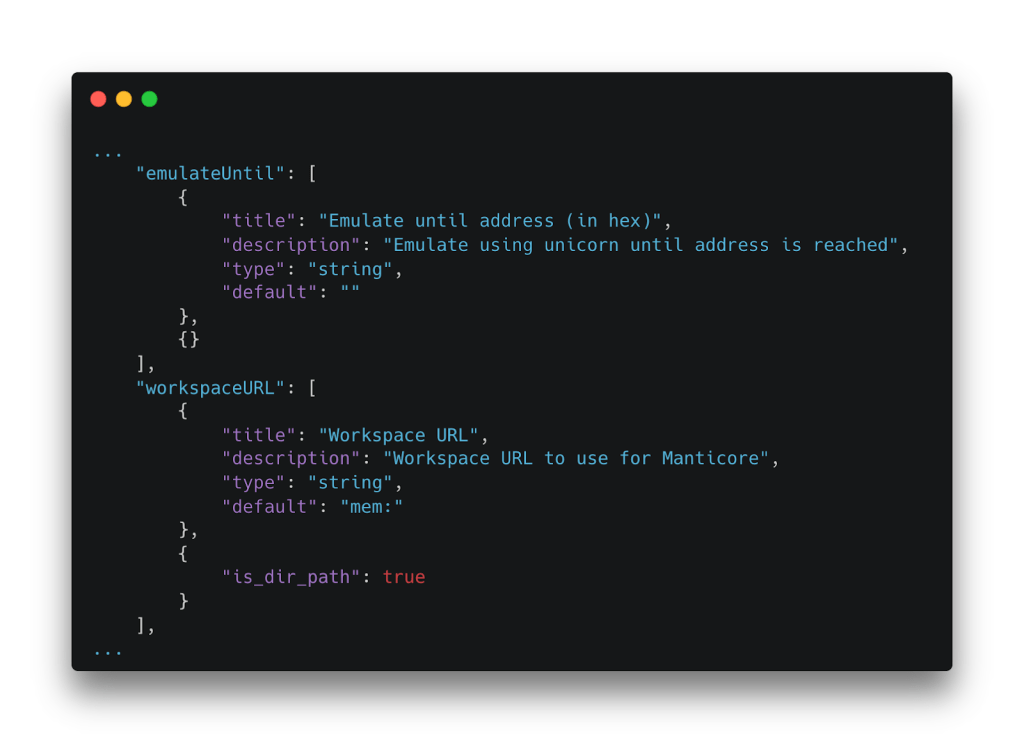

To alleviate the “chore” of handling fixed strings and other front-end information that will be identical across plugins, we can store such data in JSON files that are packaged with MUI plugin releases and loaded on startup. In this way, we can standardize data such as the fields, field descriptors, and default values of the run dialogs used to start Manticore’s execution.

Demo: Developing a Feature for MUI

Let’s take a look at the process of developing a feature for MUI. Suppose that we want to enable manual state management during the runtime of a Manticore instance. Specifically, we want the ability to do the following:

- Pause a state and resume it at a later time, which will be useful if we implement capabilities like execution tracing in the future.

- Kill a state at our discretion. That way, if a state bypasses an

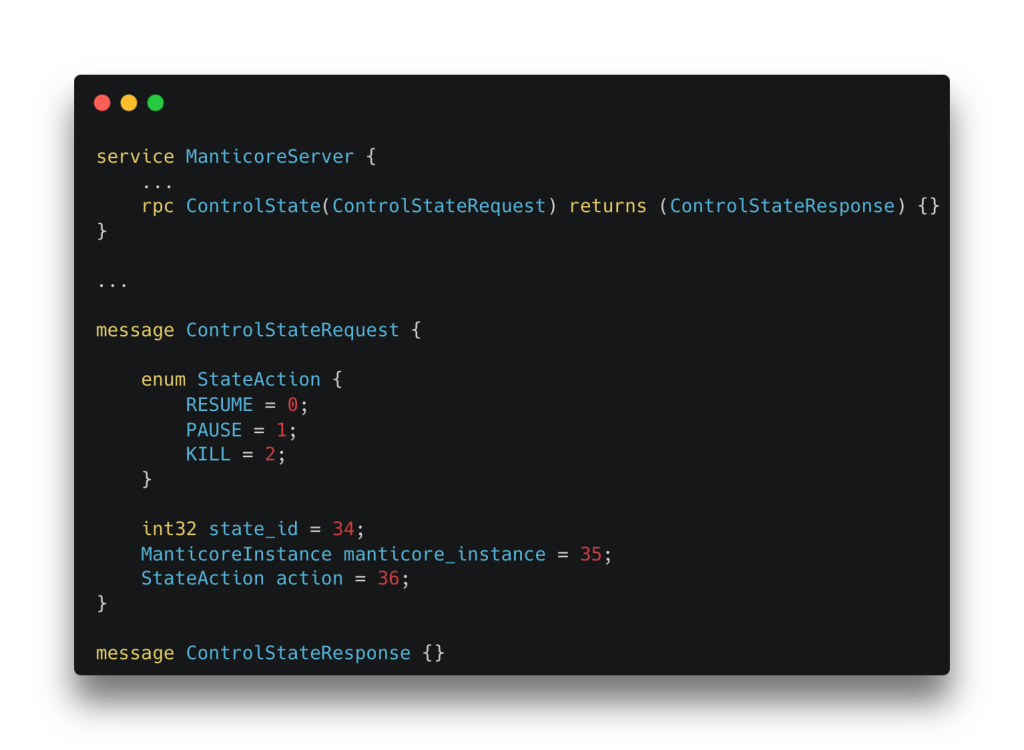

avoidhook or becomes stuck in an infinite loop, we will be able to abandon it.First, we will define a new RPC and the RPC’s message formats in our protocol buffer file. The server will receive the state’s numeric ID, the Manticore instance that we’re working with, and aStateActionenum indicating whether it should resume, pause, or kill the state.

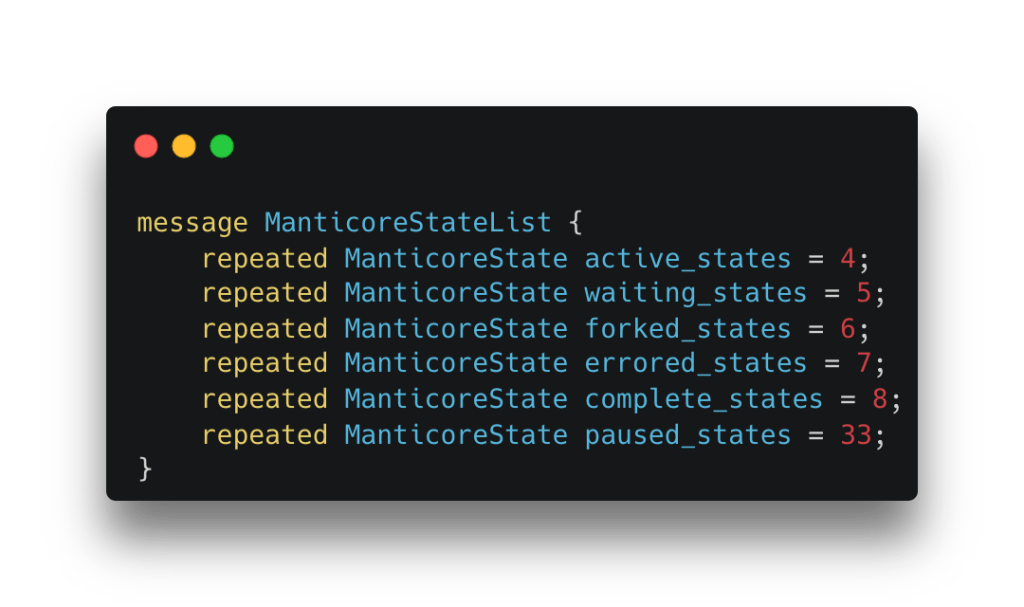

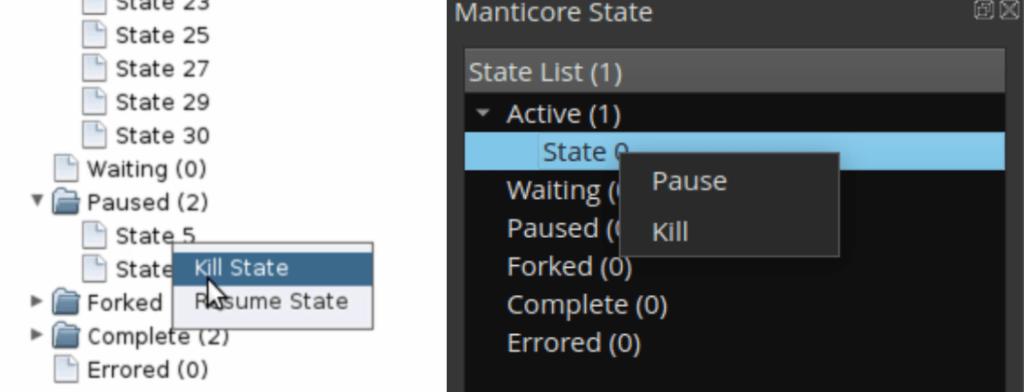

We’ll also need to update an existing message—ManticoreStateList. MUI plugins have state lists that display all states and the status of each state; these lists are updated via a GetStateList RPC. Because it’d be beneficial for users to see “paused” states as distinct from preexisting state statuses, we’ll add a new paused_states field to the RPC’s response message, which will contain a list of the states paused by the user.

With that, we can proceed to generate the Python service interface code and mypy type stubs! During my summer internship, I used the command runner just to abstract away this work for developers, so we can run the just generate command to…just generate the required code!

Now we can move on to implementing the back-end functionality. This code is contained in a single Servicer class in which each method represents an individual RPC.

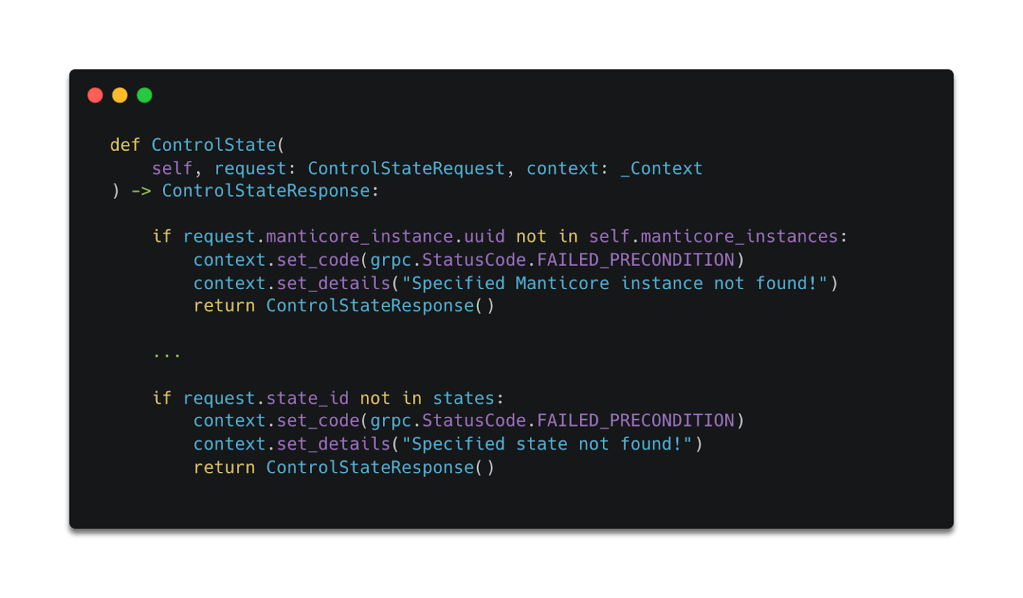

We’ll begin by validating the RPC request data. While gRPC-generated code can check that the fields provided to it are correctly typed, it cannot enforce the use of any fields or verify that fields are well formed. Thus, we’ll write our own checks to assert the validity of the request; we’ll also set an error code and error details to be returned to the requester if the request is invalid.

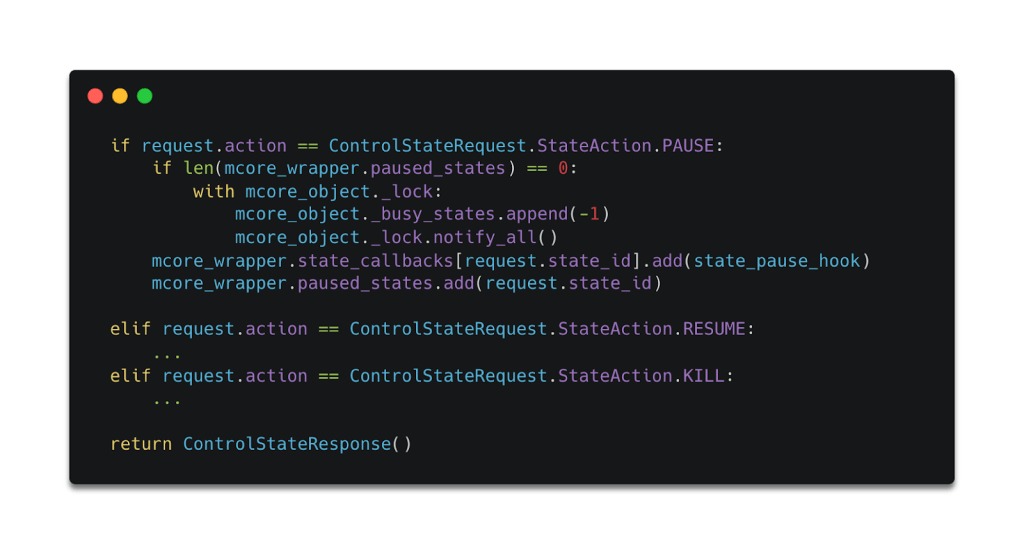

Finally, we can implement the state-pausing and state-killing functionality by directly accessing Manticore’s Python API. This direct access provides us far more control than we’d have if we were interacting with Manticore through its command-line options, enabling us to create a dummy busy state or abandon a specific state, for example. If all goes well, the front-end plugin will receive an empty response, which will be populated with the default OK status code.

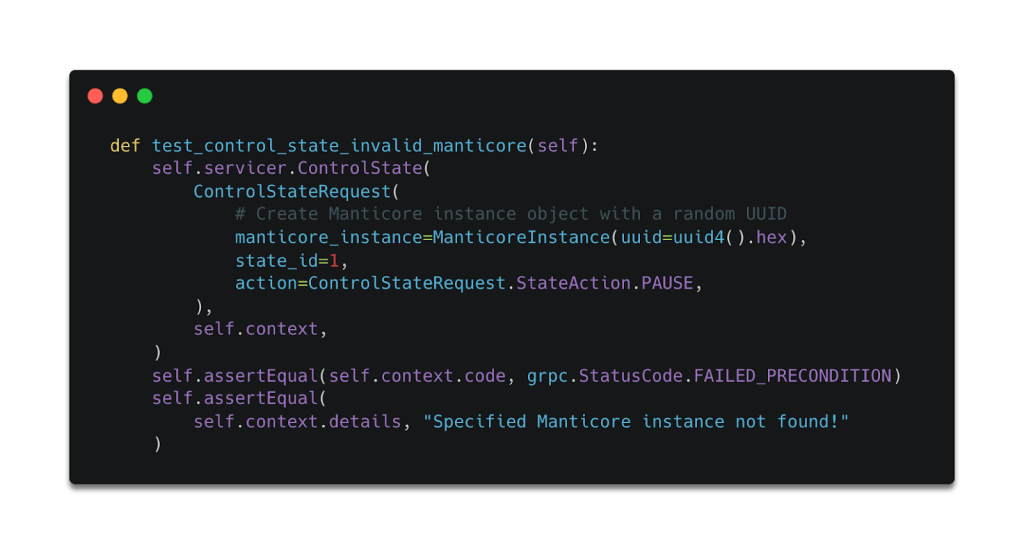

One additional benefit of our approach is that we can write tests for our RPCs without having to deal with spawning up a server and connecting to it. Instead, we can simply call the Servicer’s methods directly and pass in a new Context object, which we can later inspect for error codes.

Once we’re done, we can use our trusty command runner to “just build” the shiv binary, which will give us a fresh server binary that we can use in our plugins.

First, we need to generate the Java service interface code, which will be based on the updated protocol buffer. gRPC maintains a Java library, grpc-java, that will handle this for us.

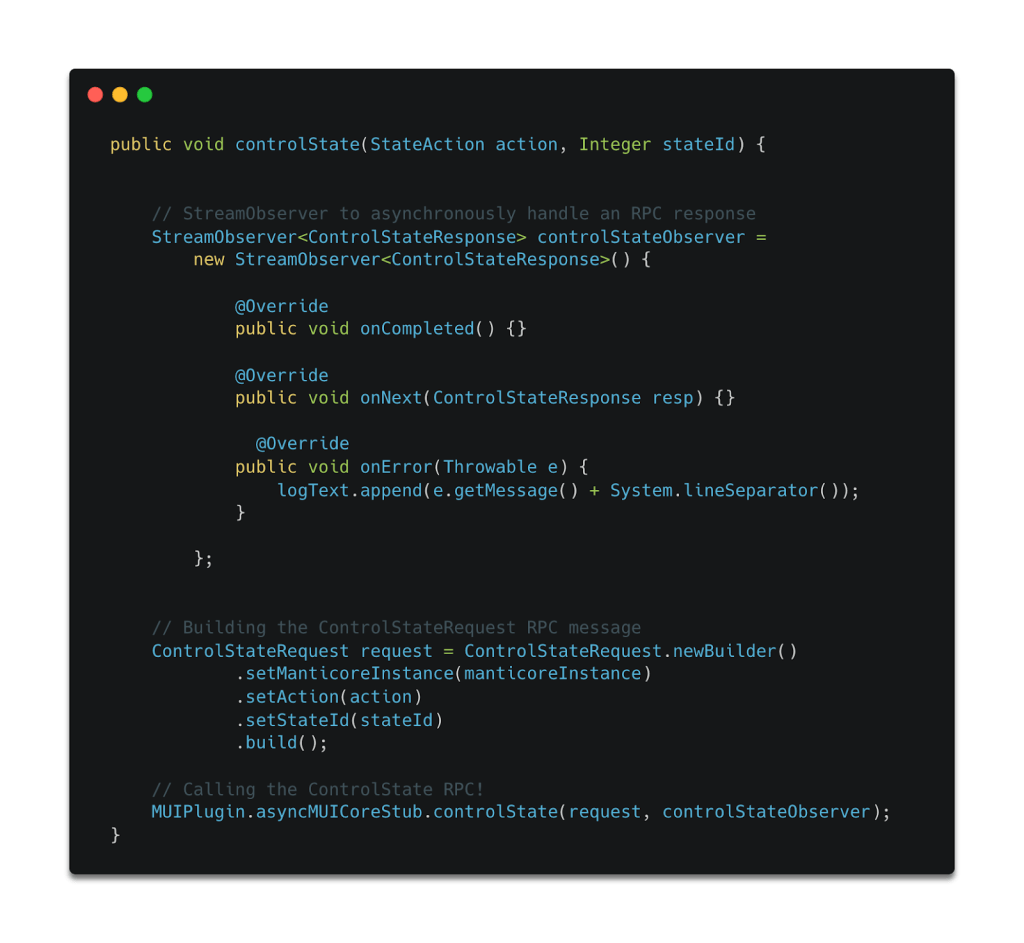

Then, we have to write the function that actually executes the RPC. In our plugins, we encapsulate all such “connector” functions in a single file. In the Ghidra plugin, writing a connector function involves only three steps. First, we create a StreamObserver object to asynchronously handle RPC responses. In this case, we need only implement behavior for handling error cases, since the “results” of successful ControlState RPCs will be available to the user via the GetStateList RPC. Then we build the ControlStateRequest object, populating the fields as required. Lastly, we actually execute the RPC through a method exposed by the gRPC-generated ManticoreServerStub, which conveniently handles all communication with the server for us.

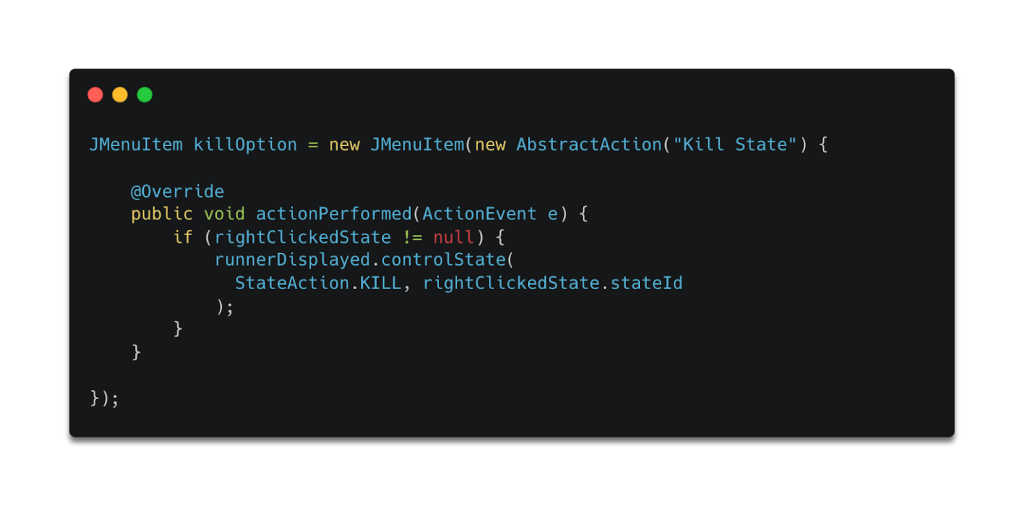

The only thing left to do is to make the appropriate UI changes! For this feature, we can simply open a context menu by right-clicking on a state in the state list and then populate the state with the actions that call the controlState method!

The added code creates a new option in the context menu and calls the “connector” method when that new option is selected.

With the Manticore functionality handled by the gRPC server, the UI changes that we must implement on the “front ends” of the Ghidra and Binary Ninja plugins are fairly straightforward.

The “hypothetical” state management feature discussed in the demo has actually been implemented and is now part of MUI! If you’re interested in seeing all of the changes and commits, check out the pull requests for the server and the Ghidra plugin. ( These pull requests were made before a refactor of where this code lives. The code now lives in the main Manticore repository under the server directory.)

A Quick Step-by-Step Guide

To summarize, the steps for adding a new feature to MUI are as follows:

Start by modifying the MUI Server binary.

- Edit the protocol buffer (the

ManticoreServer.protofile) if new RPCs or modifications to existing messages are required. - Generate service interface code for the server script by running the

just generatecommand. - Add the functionality and the request validation code to the server script (

manticore_server.py), through which one can interact with the Manticore API directly. - Where applicable, write tests for the new functionality.

- Build the shiv server executable by running the

just buildcommand.

Then, for each front-end plugin:

- Use / copy over the new server binary;

- Generate service interface code in the programming language used by the plugin; and

- Make any relevant front-end changes (and, where applicable, share standardized data as part of MUI’s common resources).

Conclusion

My internships at Trail of Bits have been really fulfilling, and I’m proud of how the MUI project has progressed. Having struggled with the seemingly black-box nature of symbolic execution the first few times I tried to apply it in capture-the-flag challenges, I’m confident that the MUI project will make symbolic execution more accessible to beginners. Additionally, integration with well-established interactive disassemblers will make symbolic execution a more natural part of the vulnerability discovery process.

I’m also pleased with how the MUI development experience has progressed. Plugin development isn’t the smoothest experience, in part because the user-facing plugin installation processes aren’t designed for rapid prototyping or incremental changes. Thus, spending my winternship building the Ghidra plugin from scratch was a harrowing experience. On top of familiarizing myself with Java and picking up a new plugin development framework (both of which have their own learning curves), I spent a lot of time thinking about whether adding a certain feature would even be possible! With the new MUI server architecture, I’m now able to spend that time more productively, thinking only about how a new feature could aid in the vulnerability discovery process.

In addition to making development a less time-consuming process, the new MUI server architecture provides dev-centric features that make it a far smoother one too. These include just scripts, Gradle methods, and unit tests. In previous projects, I treated the implementation of such features as a chore; even during my internships, I began adding them to the new server only after some prodding and guidance from my mentor Eric Kilmer. That work, though, made a world of difference in my development speed, the quality of my code, and my level of frustration while debugging!