Devirtualizing C++ with Binary Ninja

In my first blog post, I introduced the general structure of Binary Ninja’s Low Level IL (LLIL), as well as how to traverse and manipulate it with the Python API. Now, we’ll do something a little more interesting.

Reverse engineering binaries compiled from object-oriented languages can be challenging, particularly when it comes to virtual functions. In C++, invoking a virtual function involves looking up a function pointer in a virtual table (vtable) and then making an indirect call. In the disassembly, all you see is something like mov rax, [rcx+0x18]; call rax. If you want to know what function it will call for a given class object, you have to find the virtual table and then figure out which function pointer is at that offset.

Or you could use this plugin!

An Example Plugin: Navigating to a Virtual Function

vtable-navigator.py is an example plugin that will navigate to a given class’s virtual function from a call instruction. First, the plugin uses the LLIL to identify a specified class’s vtable when it is referenced in a constructor. Next, it will preprocess the call instruction’s basic block to track register assignments and their corresponding LLIL expressions. Finally, the plugin will process the LowLevelILInstruction object of the call and calculate the offset of the function to be called by recursively visiting register assignment expressions.

Discovering the vtable Pointer

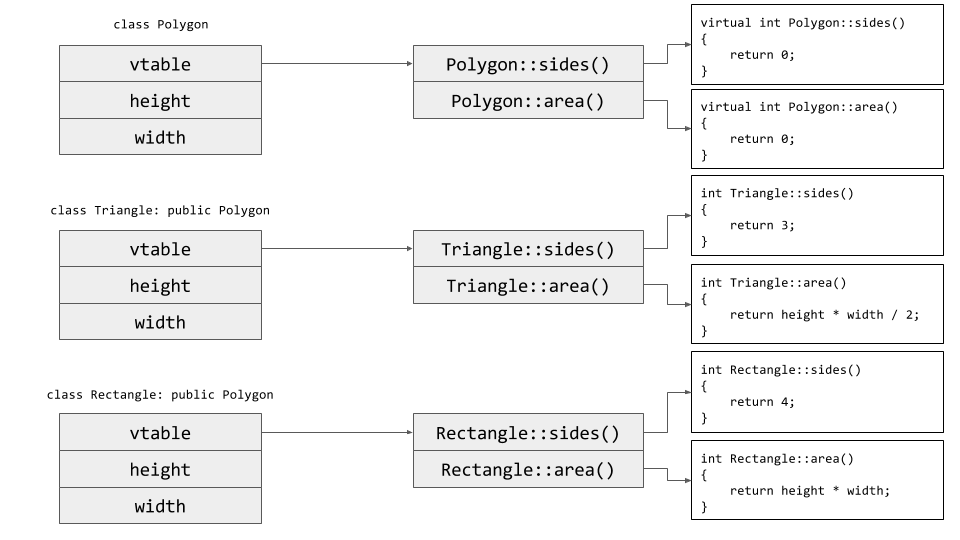

Figure 1: Two classes inherit from a base virtual class. Each class’s virtual table points to its respective implementation of the virtual functions.

In the simplest form, a class constructor stores a pointer to its vtable in the object’s structure in memory. The two most common ways that a vtable pointer can be stored are either directly referencing a hardcoded value for the vtable pointer or storing the vtable pointer in a register, then copying that register’s value into memory. Thus, if we look for a write to a memory address from a register with no offset, then it’s probably the vtable.

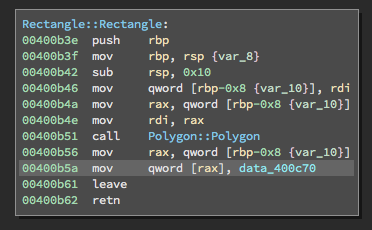

An example constructor. The highlighted instruction stores a vtable in the object’s structure.

We can detect the first kind of vtable pointer assignment by looking for an LLIL instruction in the constructor’s LowLevelILFunction object (as described in Part 1) that stores a constant value to a memory address contained in a register.

According to the API, an LLIL_STORE instruction has two operands: dest and src. Both are LLIL expressions. For this case, we are looking for a destination value provided by a register, so dest should be an LLIL_REG expression. The value to be stored is a constant, so src should be an LLIL_CONST expression. If we match this pattern, then we assume that the constant is a vtable pointer, read the value pointed to by the constant (i.e. il.src.value), and double check that there’s a function pointer there, just to make sure it’s actually a vtable.

# If it's not a memory store, then it's not a vtable.

if il.operation != LowLevelILOperation.LLIL_STORE:

continue

# vtable is referenced directly

if (il.dest.operation == LowLevelILOperation.LLIL_REG and

il.src.operation == LowLevelILOperation.LLIL_CONST):

fp = read_value(bv, il.src.value, bv.address_size)

if not bv.is_offset_executable(fp):

continue

return il.src.valuePretty straight forward, but let’s look at the second case, where the value is first stored in a register.

For this case, we search for instructions where the dest and src operands of an LLIL_STORE are both LLIL_REG expressions. Now we need to determine the location of the virtual table based only on the register.

This is where things get cool. This situation not only demonstrates usage of the LLIL, but how powerful the dataflow analysis performed on the LLIL can be. Without dataflow analysis, we would have to parse this LLIL_STORE instruction, figure out which register is being referenced, and then step backwards to find the last value assigned to that register. With the dataflow analysis, the register’s current value is readily available with a single call to get_reg_value_at_low_level_il_instruction.

# vtable is first loaded into a register, then stored

if (il.dest.operation == LowLevelILOperation.LLIL_REG and

il.src.operation == LowLevelILOperation.LLIL_REG):

reg_value = src_func.get_reg_value_at_low_level_il_instruction(

il.instr_index, il.src.src

)

if reg_value.type == RegisterValueType.ConstantValue:

fp = read_value(bv, reg_value.value, bv.address_size)

if not bv.is_offset_executable(fp):

continue

return reg_value.valuePropagation of Register Assignments

Now that we know the location of the vtable, let’s figure out which offset is called. To determine this value, we need to trace back through the state of the program from the call instruction to the moment the vtable pointer is retrieved from memory, calculate the offset into the virtual table, and discover which function is being called. We accomplish this tracing by implementing a rudimentary dataflow analysis that preprocesses the basic block containing the call instruction. This preprocessing step will let us query the state of a register at any point in the basic block.

def preprocess_basic_block(bb):

defs = {}

current_defs = {}

for instr in bb:

defs[instr.instr_index] = copy(current_defs)

if instr.operation == LowLevelILOperation.LLIL_SET_REG:

current_defs[instr.dest] = instr

elif instr.operation == LowLevelILOperation.LLIL_CALL:

# wipe out previous definitions since we can't

# guarantee the call didn't modify registers.

current_defs.clear()

return defsAt each instruction of the basic block, we keep a table of register states. As we iterate over each LowLevelILInstruction, this table is updated when an LLIL_SET_REG operation is encountered. For each register tracked, we store the LowLevelILInstruction responsible for changing its value. Later, we can query this register’s state and retrieve the LowLevelILInstruction and recursively query the value of the src operand, which is the expression the register currently represents.

Additionally, if an LLIL_CALL operation is encountered, then we clear the register state from that point on. The called function might modify the registers, and so it is safest to assume that all registers after the call have unknown values.

Now we have all the data that we need to model the vtable pointer dereference and calculate the virtual function offset.

Calculating the Virtual Function Offset

Before diving into the task of calculating the offset, let’s consider how we can model the behavior. Looking back at Figure 1, dispatching a virtual function can be generalized into four steps:

- Read a pointer to the vtable from the object’s structure in memory (

LLIL_LOAD). - Add an offset to the pointer value, if the function to be dispatched is not the first function (

LLIL_ADD). - Read the function pointer at the calculated offset (

LLIL_LOAD). - Call the function (

LLIL_CALL).

Dispatching a virtual function can therefore be modeled by evaluating the src operand expression of the LLIL_CALL instruction, recursively visiting each expression. The base case of the recursion is reached when the LLIL_LOAD instruction of step 1 is encountered. The value of that LLIL_LOAD is the specified vtable pointer. The vtable pointer value is returned and propagates back through the previous iterations of the recursion to be used in those iterations’ evaluations.

Let’s step through the evaluation of an example, to see how the model works and how it is implemented in Python. Take the following virtual function dispatch in x86.

mov eax, [ecx] ; retrieve vtable pointer call [eax+4] ; call the function pointer at offset 4 of the vtable

This assembly would be translated into the following LLIL.

0: eax = [ecx].d 1: call ([eax + 4].d)

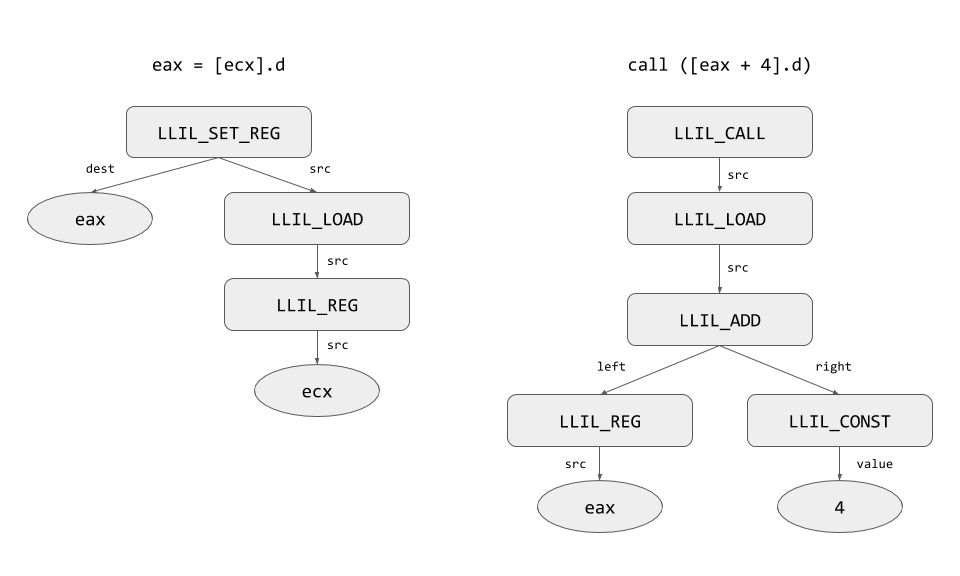

Building out the trees for these two LLIL instructions yields the following structures.

Figure 2: LLIL tree structures for the example vtable dispatch assembly.

The src operand of the LLIL_CALL is an LLIL_LOAD expression. We evaluate the src operand of the LLIL_CALL with a handler based on its operation.

# This lets us handle expressions in a more generic way. # operation handlers take the following parameters: # vtable (int): the address of the class's vtable in memory # bv (BinaryView): the BinaryView passed into the plugin callback # expr (LowLevelILInstruction): the expression to handle # current_defs (dict): The current state of register definitions # defs (dict): The register state table for all instructions # load_count (int): The number of LLIL_LOAD operations encountered operation_handler = defaultdict(lambda: (lambda *args: None)) operation_handler[LowLevelILOperation.LLIL_ADD] = handle_add operation_handler[LowLevelILOperation.LLIL_REG] = handle_reg operation_handler[LowLevelILOperation.LLIL_LOAD] = handle_load operation_handler[LowLevelILOperation.LLIL_CONST] = handle_const

Therefore, the first iteration of our recursive evaluation of this virtual function dispatch is a call to handle_load.

def handle_load(vtable, bv, expr, current_defs, defs, load_count):

load_count += 1

if load_count == 2:

return vtable

addr = operation_handler[expr.src.operation](

vtable, bv, expr.src, current_defs, defs, load_count

)

if addr is None:

return

# Read the value at the specified address.

return read_value(bv, addr, expr.size)handle_load first increments a count of LLIL_LOAD expressions encountered. Recall that our model for dereferencing a vtable expects two LLIL_LOAD instructions: the vtable pointer, then the function pointer we want. Tracing backwards through the program state means that we will encounter the load of the function pointer first and the load for the vtable pointer second. The count is 1 at the moment, so the recursion should not yet be terminated. Instead, the src operand of the LLIL_LOAD is recursively evaluated by a handler function for the src expression. When this call to a handler completes, addr should contain the address that points to the function pointer to be dispatched. In this case, src is an LLIL_ADD, so handle_add is called.

def handle_add(vtable, bv, expr, current_defs, defs, load_count):

left = expr.left

right = expr.right

left_value = operation_handler[left.operation](

vtable, bv, left, current_defs, defs, load_count

)

right_value = operation_handler[right.operation](

vtable, bv, right, current_defs, defs, load_count

)

if None in (left_value, right_value):

return None

return left_value + right_valuehandle_add recursively evaluates both the left and right sides of the LLIL_ADD expression and returns the sum of these values back to its caller. In our example, the left operand is an LLIL_REG expression, so handle_reg is called.

def handle_reg(vtable, bv, expr, current_defs, defs, load_count):

# Retrieve the LLIL expression that this register currently

# represents.

set_reg = current_defs.get(expr.src, None)

if set_reg is None:

return None

new_defs = defs.get(set_reg.instr_index, {})

return operation_handler[set_reg.src.operation](

vtable, bv, set_reg.src, new_defs, defs, load_count

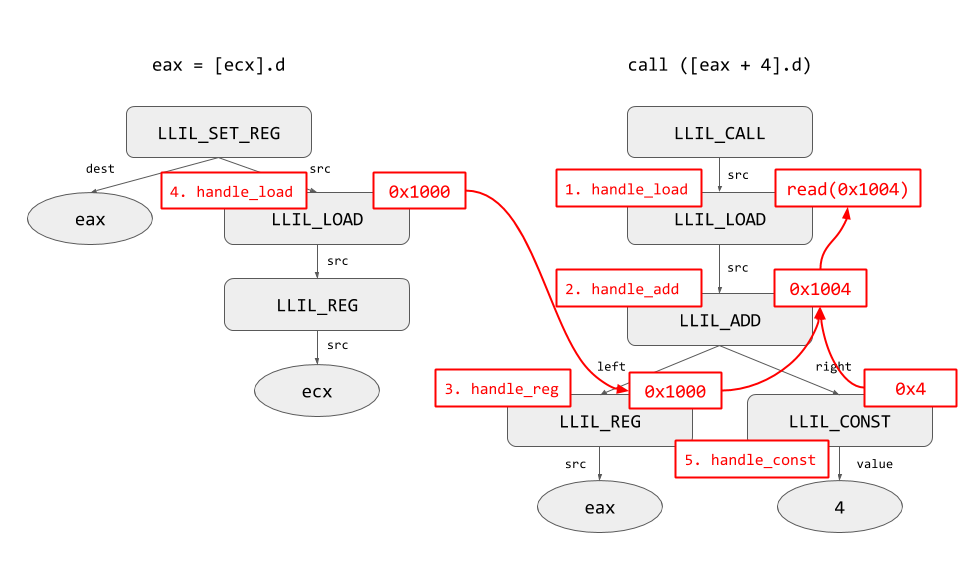

)This is where our dataflow analysis comes into play. Using the current register state, as described by current_defs, we identify the LLIL expression that represents the current value of this LLIL_REG expression. Based on the example above, current_defs[‘eax’] would be the expression [ecx].d. This is another LLIL_LOAD expression, so handle_load is called again. This time, load_count is incremented to 2, which meets the base case. If we assume that in our example the user chose a class whose constructor is located at 0x1000, then handle_load will return the value 0x1000.

With the left operand evaluated, it is now time for handle_add to evaluate the right operand. This expression is an LLIL_CONST, which is very easy to evaluate; we just return the value operand of the expression. With both left and right operands evaluated, handle_add returns the sum of the expression, which is 0x1004. handle_load receives the return value from handle_add, then reads the function pointer located at that address from the BinaryView. We can then change the currently displayed function by calling bv.file.navigate(bv.file.view, function_pointer) in the BinaryView object.

Returning to the LLIL tree structures earlier, we can annotate the structures to visualize how the recursion and concrete data propagation happens.

Figure 3: LLIL tree structures for the example vtable dispatch assembly, annotated with handler calls and propagation of concrete values back up the call chain.

Example: Rectangles and Triangles

For a real world example, I used a slightly modified version of this C++ tutorial, which you can find here. Here’s a demonstration of the plugin in action:

If you compile virtual-test.cpp for both x86-64 and ARM and open the binaries in Binary Ninja with the plugin installed, you will find that it will work on both architectures without needing any architecture-specific code. That’s the beauty of an intermediate representation!

Go Forth and Analyze

Binary Ninja’s LLIL is a powerful feature that enables cross-platform program analysis to be easily developed. As we’ve seen, its structure is simple, yet allows for representation of even the most complex instructions. The Python API is a high quality interface that we can use effectively to traverse instructions and process operations and operands with ease. More importantly, we’ve seen simple examples of how dataflow analysis, enabled by the LLIL, can allow us to develop cross-platform plugins to perform program analysis without having to implement complicated heuristics to calculate program values. What are you waiting for? Pick up a copy of Binary Ninja and start writing your own binary analysis with the LLIL, and don’t forget to attend Sophia’s presentation of “Next-level Static Analysis for Vulnerability Research” using the Binary Ninja LLIL at INFILTRATE 2017.