Apple can comply with the FBI court order

Earlier today, a federal judge ordered Apple to comply with the FBI’s request for technical assistance in the recovery of the San Bernadino gunmen’s iPhone 5C. Since then, many have argued whether these requests from the FBI are technically feasible given the support for strong encryption on iOS devices. Based on my initial reading of the request and my knowledge of the iOS platform, I believe all of the FBI’s requests are technically feasible.

The FBI’s Request

In a search after the shooting, the FBI discovered an iPhone belonging to one of the attackers. The iPhone is the property of the San Bernardino County Department of Public Health where the attacker worked and the FBI has permission to search it. However, the FBI has been unable, so far, to guess the passcode to unlock it. In iOS devices, nearly all important files are encrypted with a combination of the phone passcode and a hardware key embedded in the device at manufacture time. If the FBI cannot guess the phone passcode, then they cannot recover any of the messages or photos from the phone.

There are a number of obstacles that stand in the way of guessing the passcode to an iPhone:

- iOS may completely wipe the user’s data after too many incorrect PINs entries

- PINs must be entered by hand on the physical device, one at a time

- iOS introduces a delay after every incorrect PIN entry

As a result, the FBI has made a request for technical assistance through a court order to Apple. As one might guess, their requests target each one of the above pain points. In their request, they have asked for the following:

- [Apple] will bypass or disable the auto-erase function whether or not it has been enabled;

- [Apple] will enable the FBI to submit passcodes to the SUBJECT DEVICE for testing electronically via the physical device port, Bluetooth, Wi-Fi, or other protocol available on the SUBJECT DEVICE; and

- [Apple] will ensure that when the FBI submits passcodes to the SUBJECT DEVICE, software running on the device will not purposefully introduce any additional delay between passcode attempts beyond what is incurred by Apple hardware.

In plain English, the FBI wants to ensure that it can make an unlimited number of PIN guesses, that it can make them as fast as the hardware will allow, and that they won’t have to pay an intern to hunch over the phone and type PIN codes one at a time for the next 20 years — they want to guess passcodes from an external device like a laptop or other peripheral.

As a remedy, the FBI has asked for Apple to perform the following actions on their behalf:

[Provide] the FBI with a signed iPhone Software file, recovery bundle, or other Software Image File (“SIF”) that can be loaded onto the SUBJECT DEVICE. The SIF will load and run from Random Access Memory (“RAM”) and will not modify the iOS on the actual phone, the user data partition or system partition on the device’s flash memory. The SIF will be coded by Apple with a unique identifier of the phone so that the SIF would only load and execute on the SUBJECT DEVICE. The SIF will be loaded via Device Firmware Upgrade (“DFU”) mode, recovery mode, or other applicable mode available to the FBI. Once active on the SUBJECT DEVICE, the SIF will accomplish the three functions specified in paragraph 2. The SIF will be loaded on the SUBJECT DEVICE at either a government facility, or alternatively, at an Apple facility; if the latter, Apple shall provide the government with remote access to the SUBJECT DEVICE through a computer allowed the government to conduct passcode recovery analysis.

Again in plain English, the FBI wants Apple to create a special version of iOS that only works on the one iPhone they have recovered. This customized version of iOS (ahem FBiOS) will ignore passcode entry delays, will not erase the device after any number of incorrect attempts, and will allow the FBI to hook up an external device to facilitate guessing the passcode. The FBI will send Apple the recovered iPhone so that this customized version of iOS never physically leaves the Apple campus.

As many jailbreakers are familiar, firmware can be loaded via Device Firmware Upgrade (DFU) Mode. Once an iPhone enters DFU mode, it will accept a new firmware image over a USB cable. Before any firmware image is loaded by an iPhone, the device first checks whether the firmware has a valid signature from Apple. This signature check is why the FBI cannot load new software onto an iPhone on their own — the FBI does not have the secret keys that Apple uses to sign firmware.

Enter the Secure Enclave

Even with a customized version of iOS, the FBI has another obstacle in their path: the Secure Enclave (SE). The Secure Enclave is a separate computer inside the iPhone that brokers access to encryption keys for services like the Data Protection API (aka file encryption), Apple Pay, Keychain Services, and our Tidas authentication product. All devices with TouchID (or any devices with A7 or later A-series processors) have a Secure Enclave.

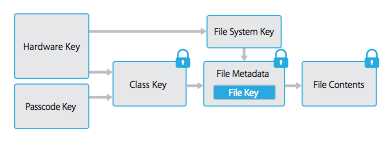

When you enter a passcode on your iOS device, this passcode is “tangled” with a key embedded in the SE to unlock the phone. Think of this like the 2-key system used to launch a nuclear weapon: the passcode alone gets you nowhere. Therefore, you must cooperate with the SE to break the encryption. The SE keeps its own counter of incorrect passcode attempts and gets slower and slower at responding with each failed attempt, all the way up to 1 hour between requests. There is nothing that iOS can do about the SE: it is a separate computer outside of the iOS operating system that shares the same hardware enclosure as your phone.

As a result, even a customized version of iOS cannot influence the behavior of the Secure Enclave. It will delay passcode attempts whether or not that feature is turned on in iOS. Private keys cannot be read out of the Secure Enclave, ever, so the only choice you have is to play by its rules.

Apple has gone to great lengths to ensure the Secure Enclave remains safe. Many consumers became familiar with these efforts after “Error 53” messages appeared due to 3rd party replacement or tampering with the TouchID sensor. iPhones are restricted to only work with a single TouchID sensor via device-level pairing. This security measure ensures that attackers cannot build a fraudulent TouchID sensor that brute-forces fingerprint authentication to gain access to the Secure Enclave.

For more information about the Secure Enclave and Passcodes, see pages 7 and 12 of the iOS Security Guide.

The Devil is in the Details

“Why not simply update the firmware of the Secure Enclave too?” I initially speculated that the private data stored within the SE was erased on updates, but I now believe this is not true. Apple can update the SE firmware, it does not require the phone passcode, and it does not wipe user data on update. Apple can disable the passcode delay and disable auto erase with a firmware update to the SE. After all, Apple has updated the SE with increased delays between passcode attempts and no phones were wiped.

If the device lacks a Secure Enclave, then a single firmware update to iOS will be sufficient to disable passcode delays and auto erase. If the device does contain a Secure Enclave, then two firmware updates, one to iOS and one to the Secure Enclave, are required to disable these security features. The end result in either case is the same. After modification, the device is able to guess passcodes at the fastest speed the hardware supports.

The recovered iPhone is a model 5C. The iPhone 5C lacks TouchID and, therefore, lacks a Secure Enclave. The Secure Enclave is not a concern. Nearly all of the passcode protections are implemented in software by the iOS operating system and are replaceable by a single firmware update.

The End Result

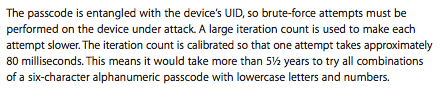

There are still caveats in these older devices and a customized version of iOS will not immediately yield access to the phone passcode. Devices with A6 processors, such as the iPhone 5C, also contain a hardware key that cannot ever be read. This key is also “tangled” with the phone passcode to create the encryption key. However, there is nothing that stops iOS from querying this hardware key as fast as it can. Without the Secure Enclave to play gatekeeper, this means iOS can guess one passcode every 80ms.

Even though this 80ms limit is not ideal, it is a massive improvement from guessing only one passcode per hour with unmodified software. After the elimination of passcode delays, it will take a half hour to recover a 4-digit PIN, hours to recover a 6-digit PIN, or years to recover a 6-character alphanumeric password. It has not been reported whether the recovered iPhone uses a 4-digit PIN or a longer, more complicated alphanumeric passcode.

Festina Lente

Apple has allegedly cooperated with law enforcement in the past by using a custom firmware image that bypassed the passcode lock screen. This simple UI hack was sufficient in earlier versions of iOS since most files were unencrypted. However, since iOS 8, it has become the default for nearly all applications to encrypt their data with a combination of the phone passcode and the hardware key. This change necessitates guessing the passcode and has led directly to this request for technical assistance from the FBI.

I believe it is technically feasible for Apple to comply with all of the FBI’s requests in this case. On the iPhone 5C, the passcode delay and device erasure are implemented in software and Apple can add support for peripheral devices that facilitate PIN code entry. In order to limit the risk of abuse, Apple can lock the customized version of iOS to only work on the specific recovered iPhone and perform all recovery on their own, without sharing the firmware image with the FBI.

For more information, please listen to my interview with the Risky Business podcast.

- Update 1: Apple has issued a public response to the court order.

- Update 2: Software updates to the Secure Enclave are unlikely to erase user data. Please see the Secure Enclave section for further details.

- Update 3: Reframed “The Devil is in the Details” section and noted that Apple can equally subvert the security measures of the iPhone 5C and later devices that include the Secure Enclave via software updates.