Hacking for Charity: Automated Bug-finding in LibOTR

At the end of last year, we had some free time to explore new and interesting uses of the automated bug-finding technology we developed for the DARPA Cyber Grand Challenge. While the rest of the competitors are quietly preparing for the CGC Final Event, we can entertain you with tales of running our bug-finding tools against real Linux applications.

Like many good stories, this one starts with a bet:

On November 4, 2014, Thomas Ptacek (of Starfighter) bet Matthew Green (of Johns Hopkins) that libotr, a popular library used in secure messaging software, would have a high-severity (e.g. remote code execution, information disclosure) bug in the next 12 months. Here at Trail of Bits, we like a good wager, especially when the proceeds go to charity. And we just happened to have an automated bug-finding system lying around, itching for something to do. The temptation was too much to resist: we decided to use our automated bug-finding system from the Cyber Grand Challenge to look for bugs in libotr.

Before we go on, we should state that this was not a security audit. We simply wanted to test how well our automated bug-finding system works on real Linux software and maybe win some money for charity.

We successfully enhanced our bug-finding system to support the libotr library and tested it extensively. Our system found no critical bugs in the code paths that we tested; since no one else reported any bugs, the bet ended with Thomas Ptacek donating $1000 to Partners in Health.

Read on to discover the challenges encrypted communications systems present for automated testing, how we solved them, and our testing methodology. Of course, just because our system didn’t find bugs in libotr does not mean that libotr is bug-free.

Background

The automated bug-finding system, known as a Cyber Reasoning System (CRS), that we built for the Cyber Grand Challenge operates on binary code for the DECREE operating system. While DECREE is based on Linux, it differs considerably from plain Linux. DECREE has no signals, no shared memory, no threads, no sockets, no files, and only seven system calls. This means that DECREE is not binary or source compatible with Linux libraries like libotr.

After weighing our options, we decided the easiest and fastest way to test libotr was to port it to DECREE, instead of adding full Linux support to our CRS. We attempted the port in a generic manner, to ensure we could use the lessons learned to test future Linux software.

To port libotr, we had to solve two major issues: shared library dependencies (libotr depends on libgpgerror and libgcrypt) and libc support. We used LLVM to solve both problems at once. First, we used whole-program-llvm to compile libotr and all dependencies to LLVM bitcode. We then merged all the shared libraries at the bitcode level, and aggressively optimized the resulting bitcode. In one move, we eliminated the need for shared libraries, and drastically reduced the amount of libc we’d have to implement, because unused libc calls were optimized out of the resulting bitcode. To build a libc that works on DECREE, we combined libc implementations from the challenge binaries, stubbed functions that don’t make sense in DECREE, and created new implementations based on DECREE calls where appropriate.
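To make the libc reduction concrete, here is a toy model of why merging everything into one program and then eliminating dead code shrinks the set of libc functions that must be implemented. It is only a sketch: the call graph and every function name in it are hypothetical, and the real work was done by LLVM's optimizer on bitcode, not by a script like this.

```python
from collections import deque


def reachable(call_graph, roots):
    """Return every function reachable from the given entry points."""
    seen = set(roots)
    queue = deque(roots)
    while queue:
        fn = queue.popleft()
        for callee in call_graph.get(fn, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


# Hypothetical call graph of the merged program (illustrative names only).
CALL_GRAPH = {
    "app_main": ["lib_receive_message", "lib_send_message"],
    "lib_receive_message": ["memcpy", "malloc", "crypto_decrypt"],
    "lib_send_message": ["memcpy", "malloc", "crypto_encrypt"],
    "crypto_decrypt": ["memset"],
    "crypto_encrypt": ["memset"],
    # Library features the test application never calls:
    "crypto_read_keyfile": ["fopen", "fread", "fclose"],
    "err_localized_string": ["setlocale", "getenv"],
}

# libc functions referenced somewhere in the merged program.
LIBC = {"memcpy", "malloc", "memset", "fopen", "fread", "fclose",
        "setlocale", "getenv"}

live = reachable(CALL_GRAPH, ["app_main"])
needed_libc = sorted(LIBC & live)    # must exist in the DECREE libc
dropped_libc = sorted(LIBC - live)   # disappear along with the dead code

print("libc to implement:", needed_libc)
print("libc eliminated:  ", dropped_libc)
```

In this model only the libc functions reachable from the entry point survive, so file and locale helpers used solely by unused library features never need a DECREE implementation.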

Automated Testing

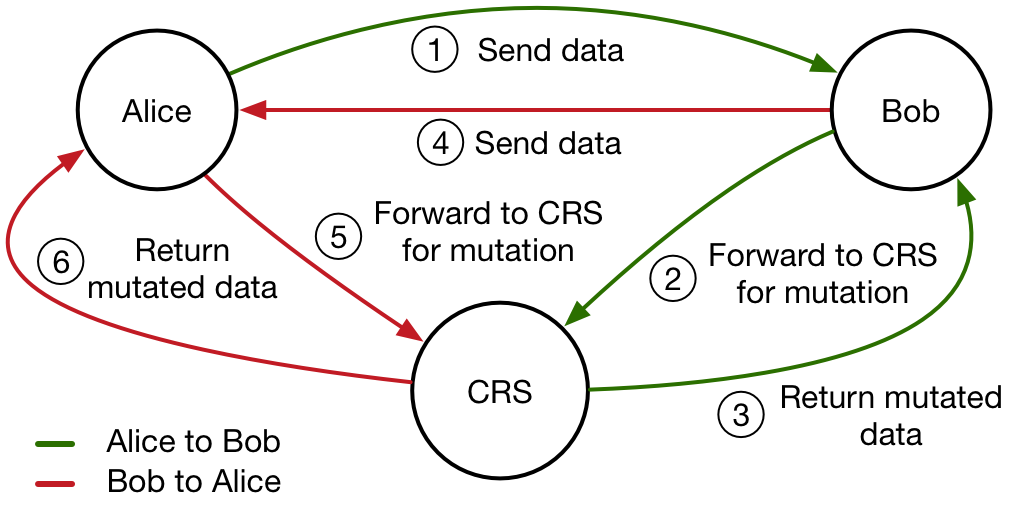

Encrypted communications applications are, by design, difficult to automatically audit. This makes perfect sense: if an automated system can reason how ciphertext relates to plaintext, the encrypted communication system is already broken. These systems are also difficult to audit by random testing (e.g. fuzzing), because recipients will verify the integrity of every message. Typically when testing encrypted systems, the encryption is turned off (or data is manipulated prior to encryption or after decryption). We wanted to simulate testing a black-box binary, so we did not modify libotr in any way. Instead, we thought the best path was to make our CRS simulate a man-in-the-middle (MITM) attack. Because we tested an unmodified libotr, our CRS could not effectively attack code past message integrity checks. However, there was still plenty of attack surface: message control data, headers, and the possibility of flaws in the decryption/authentication code. The problem was that our CRS was not designed to act as a MITM. We instead architected the test application (not libotr) to be easier to attack, which resulted in the convoluted architecture shown in the accompanying diagram.

The CRS acts as a man-in-the-middle between two applications communicating using libotr.
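To illustrate why random mutation rarely gets past an integrity check, here is a small sketch of a naive MITM fuzzer flipping single bits in an authenticated message. The frame layout (type, length, payload, HMAC-SHA256 tag) is invented for this example and is not the OTR wire format; the point is only which parsing stages a tampered frame can reach.

```python
import hashlib
import hmac
import os
import random
import struct

KEY = os.urandom(32)  # shared MAC key; the man-in-the-middle does not know it
TAG_LEN = 32          # HMAC-SHA256 output size
HDR_LEN = 3           # 1-byte type + 2-byte big-endian payload length


def build(msg_type, payload, key=KEY):
    """Frame = type | length | payload | HMAC over everything before the tag."""
    body = struct.pack(">BH", msg_type, len(payload)) + payload
    return body + hmac.new(key, body, hashlib.sha256).digest()


def receive(frame, key=KEY):
    """Return the outcome of parsing, naming the stage that rejected the frame."""
    if len(frame) < HDR_LEN + TAG_LEN:
        return "rejected: short"
    msg_type, length = struct.unpack(">BH", frame[:HDR_LEN])
    if msg_type != 1:
        return "rejected: type"
    if length != len(frame) - HDR_LEN - TAG_LEN:
        return "rejected: length"
    body, tag = frame[:-TAG_LEN], frame[-TAG_LEN:]
    expected = hmac.new(key, body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        return "rejected: mac"
    return "accepted"  # only here would post-authentication code run


def mutate(frame, rng):
    """Flip one random bit, as a naive fuzzer sitting in the middle might."""
    data = bytearray(frame)
    i = rng.randrange(len(data))
    data[i] ^= 1 << rng.randrange(8)
    return bytes(data)


rng = random.Random(0)
original = build(1, b"hello, world")
outcomes = {}
for _ in range(1000):
    result = receive(mutate(original, rng))
    outcomes[result] = outcomes.get(result, 0) + 1
print(outcomes)
```

Every mutated frame is rejected, either by the header checks or by the MAC comparison. The header parsing and the authentication code itself still see attacker-controlled bytes, which is the attack surface a MITM can exercise; anything that runs only after "accepted" stays out of reach without the key.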

Creating the test application was more difficult than porting libotr to DECREE. The porting process was fairly straightforward and took about two weeks. The sample application took a bit longer, and was a much more frustrating experience: the official libotr distribution has no sample code, and the documentation leaves a lot to be desired.

Our testing was limited by the features of libotr exercised by our sample application (for instance, it doesn’t use SMP), and by the unusual test application we created. Additionally, some vulnerabilities may only occur after decryption, and modification of encrypted and authenticated data will never trigger these bugs.

Results

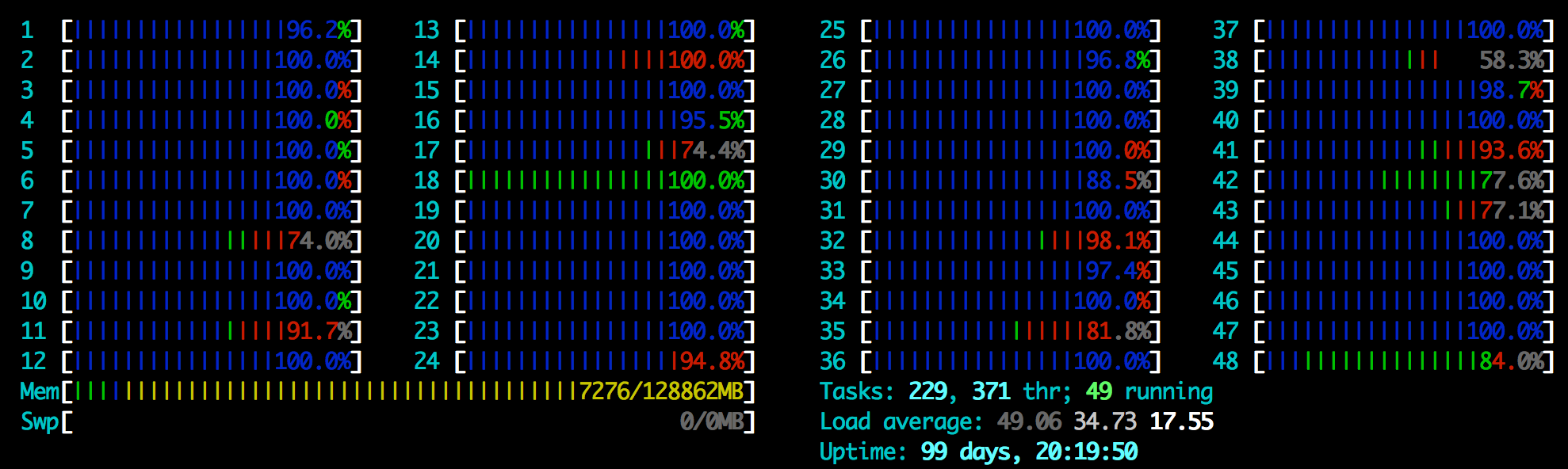

The results of testing libotr are very encouraging. We ran our CRS on 48 Xeon CPUs for 24 hours against our libotr sample application, and did not identify any memory safety violations.


This negative result does not mean that libotr is bug-free. We only tested a subset of libotr, and there are considerable parts that our CRS never audited. The lack of obvious bugs is, however, a very good sign.

Conclusion

The timeframe of the libotr bet has expired without any reported high-severity vulnerabilities. We audited parts of libotr with our automated bug-finding tools, and did not find any memory corruption vulnerabilities either. In the process of setting up this test, we learned how to port Linux applications to DECREE and verified that our CRS can identify real bugs in Linux programs. Better documentation, tests, and sample applications that exercise every libotr feature would simplify both automated and manual auditing. For this experiment we constrained ourselves to an unmodified libotr. We are planning a future test where we modify libotr to enable easier automated testing.